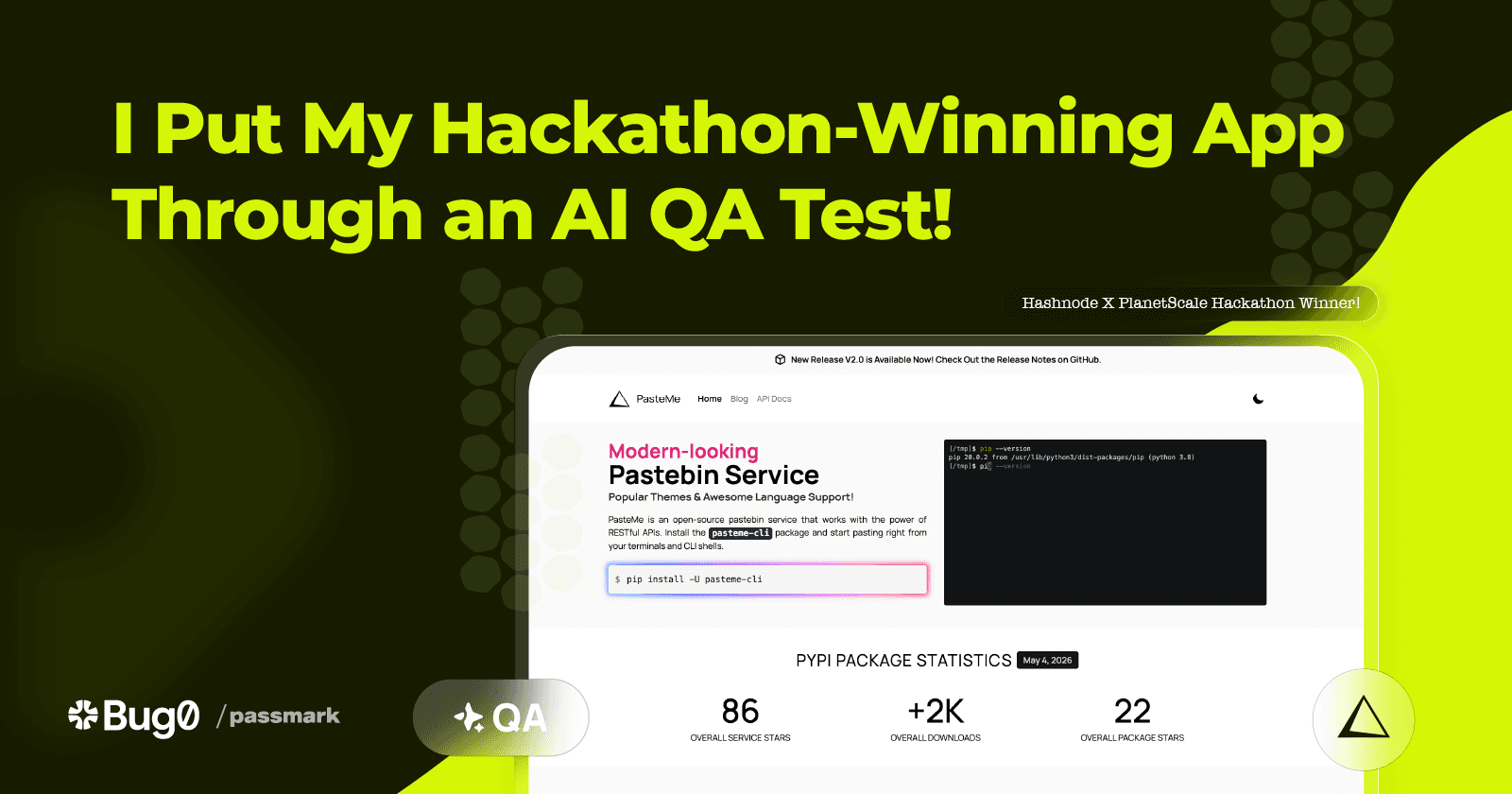

I Put My Hackathon-Winning App Through an AI QA Test — The Results Surprised Me!

I tested my Hashnode hackathon-winning project using a complex CLI-integrated Passmark test case.

A few years back, I took part in the HashnodeXPlanetScale hackathon. Our task was to develop services using the SQL databases provided by PlanetScale.

I created PasteMe, a sleek open-source pastebin that allows you to upload code snippets directly from your CLI to the cloud with just a single command in the terminal.

Fortunately, it won the hackathon. This time, I thought it would be interesting to see whether it truly holds up.

Pastebin Services

A pastebin service is essentially a cloud platform that stores your code snippets, highlights and formats them, and serves each one on a dedicated web page for viewers.
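The storage side of that description can be made concrete with a toy in-memory model. In this sketch, each snippet gets an id, a page path and an expiry time. It is a hypothetical illustration and not how PasteMe's Django backend works. `InMemoryPastebin`, the zero-padded hex id format and the `/paste/<id>` path scheme are assumptions; the path scheme is modeled on PasteMe's URLs. Highlighting and formatting are left out entirely.

```typescript
// Toy, in-memory model of a pastebin's storage (hypothetical; not PasteMe's implementation).
interface Paste {
  id: string;
  title: string;
  body: string;
  language: string;
  expiresAt: number; // epoch milliseconds
}

const DAY_MS = 24 * 60 * 60 * 1000;

class InMemoryPastebin {
  private pastes = new Map<string, Paste>();
  private nextId = 1;

  // Stores a snippet and returns the path of its dedicated page.
  create(title: string, body: string, language: string, expiresInDays: number, now: number): string {
    const id = (this.nextId++).toString(16).padStart(5, "0");
    this.pastes.set(id, { id, title, body, language, expiresAt: now + expiresInDays * DAY_MS });
    return `/paste/${id}`;
  }

  // Returns the paste behind an id, or undefined if it is unknown or has expired.
  get(id: string, now: number): Paste | undefined {
    const paste = this.pastes.get(id);
    if (paste === undefined || now >= paste.expiresAt) {
      return undefined;
    }
    return paste;
  }
}
```

The current time is passed in as `now` rather than read from the clock. This keeps the expiry logic deterministic and easy to test.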

What gets pasted?

Users paste configurations, buggy code, solutions, refactored code, and just about anything else they want to share.

PasteMe exposes a RESTful API, allowing developers and users to upload code snippets directly from their CLI.
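The sketch below shows what an upload through that API might look like without the CLI. It rests on several assumptions:

- The endpoint is `https://pasteme.pythonanywhere.com/api/v1/paste/`. The post only names the `/api/v1/paste/` path.
- The request fields are the ones the Playwright tests later in this post send.
- The JSON response carries an `id` that maps to a `/paste/<id>` page.

`createPaste` and `FetchLike` are hypothetical names. The fetch function is passed in so the sketch can be exercised without network access.

```typescript
// Assumed endpoint: the post names POST "/api/v1/paste/" on the PasteMe host.
const API_URL = "https://pasteme.pythonanywhere.com/api/v1/paste/";
// Paste pages look like https://pasteme.pythonanywhere.com/paste/2abb4
const PASTE_PAGE_URL = "https://pasteme.pythonanywhere.com/paste/";

interface PasteRequest {
  title: string;
  body: string;
  language: string;
  theme: string;
  expires_in: number; // days (1, 7 or 30 in the mock data)
}

// The subset of fetch() this sketch relies on; Node 18+'s global fetch should fit it.
type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

// POSTs a paste and resolves to the URL of its page (hypothetical helper).
async function createPaste(paste: PasteRequest, fetchImpl: FetchLike): Promise<string> {
  const res = await fetchImpl(API_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(paste),
  });
  if (!res.ok) {
    throw new Error(`PasteMe API responded with HTTP ${res.status}`);
  }
  const data = (await res.json()) as { id?: string | number };
  if (!data.id) {
    throw new Error("PasteMe API response has no id");
  }
  return `${PASTE_PAGE_URL}${data.id}`;
}
```

If those assumptions hold, the following call would resolve to a page URL:

`await createPaste({ title: "Hello", body: "console.log('hi');", language: "js", theme: "github", expires_in: 7 }, fetch)`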

The Flow of PasteMe

What sets testing PasteMe apart from testing other web services is the way PasteMe operates. The website consists of three main pages: the homepage, the blog, and the API docs. Additionally, a RESTful service runs behind the scenes to handle the pasting functionality.

I also developed a Python SDK package (essentially a CLI utility), allowing users to interact with the endpoints in a more user-friendly and visually appealing way.

Users install the pasteme-cli package in the terminal and then use the pasteme command to paste code as follows:

```console
$ pasteme app.js
(¬_¬) -> https://pasteme.pythonanywhere.com/paste/2abb4
```

When you visit the link, you'll see:

There are some options that pasteme-cli provides, such as:

- Title (`-t`): The snippet title; defaults to "Untitled".
- Expiry time (`-x`): Options include 1 day, 1 week, or 1 month.
- Theme (`-T`): All Atom and GitHub themes are available.
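The CLI itself is a Python package, but the way its options could map onto the API fields can be sketched in TypeScript. This sketch assumes `-x` corresponds to the `expires_in` day counts used in the mock data later on (1, 7 and 30). `toPastePayload`, `CliOptions` and `EXPIRY_DAYS` are hypothetical names, not part of pasteme-cli.

```typescript
// Hypothetical mirror of pasteme-cli's options; the real CLI is written in Python.
type Expiry = "1 day" | "1 week" | "1 month";

// Assumed day counts, matching the expiriesIn values in the mock data (1, 7, 30).
const EXPIRY_DAYS: Record<Expiry, number> = { "1 day": 1, "1 week": 7, "1 month": 30 };

interface CliOptions {
  title?: string; // -t, optional
  expiry: Expiry; // -x
  theme: string;  // -T, an Atom or GitHub theme name
}

// Builds the JSON body the API is assumed to accept (field names taken from the tests).
function toPastePayload(body: string, language: string, opts: CliOptions) {
  return {
    title: opts.title ?? "Untitled", // the CLI's default title
    body,
    language,
    theme: opts.theme,
    expires_in: EXPIRY_DAYS[opts.expiry],
  };
}
```

For example, `toPastePayload("console.log('hi');", "js", { expiry: "1 week", theme: "github" })` returns an object whose `title` is `"Untitled"` and whose `expires_in` is `7`.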

PasteMe Tech Stacks

The backend uses Django and Django REST framework (DRF), with PlanetScale PostgreSQL powering the database. For the frontend, I used Django's native template engine along with Tailwind CSS. The project is hosted and served on PythonAnywhere.

It's CLI-Integrated

In the past, I've done extensive testing when writing Python code, and I've written a lot of articles about testing. I enjoy writing test cases, and there's something satisfying about seeing the green checkmarks in CI pipelines confirming that all tests have passed. However, those green checkmarks don't guarantee that the code is 100% reliable and robust.

Testing PasteMe is different. It isn't purely browser-driven Playwright testing. Instead, the site is integrated with the CLI, so what you send through the CLI drives what the website displays and how it looks.

Here's how I see it: the testing flow involves pushing a code snippet to PasteMe with specific parameters, then opening the returned URL to check that the rendered page matches the request.

Parameterized Passmark Testing

Parameterized testing involves executing a single test case with various sets of inputs. Here's a simple mock data setup for testing:

```typescript
// Shape of each parameterized input
export interface Snippet {
  title: string;
  body: string;
  language: string;
  expiriesIn: number; // days until the paste expires
  theme: string;
  assertion: string; // what Passmark should visually confirm
}

export const snippets: Snippet[] = [
  {
    title: 'Hello World in JavaScript',
    body: "console.log('Hello, world!');",
    language: 'js',
    expiriesIn: 7,
    theme: 'github',
    assertion:
      'a JavaScript code snippet in light theme, available for one week.'
  },
  {
    title: 'Simple Python Function',
    body: `def greet(name):\n    return f"Hello, {name}!"`,
    language: 'python',
    expiriesIn: 30,
    theme: 'atom-one-dark',
    assertion:
      'a Python greeting function in Atom One Dark theme, available for one month.'
  },
  {
    title: 'Basic Bash Script',
    body: `#!/bin/bash\necho "Starting script..."\ndate`,
    language: 'bash',
    expiriesIn: 1,
    theme: 'dark',
    assertion:
      'a Bash echoing script in dark theme, available for one day.'
  }
];
```

The objective is to run the same test case with different inputs and expectations. For each snippet, we make the API call, retrieve the snippet ID to build the page URL, and then verify the visual output.
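The test case that follows loops over every input inside a single `test()`. As a result, the first failing snippet stops the loop and hides the results for the remaining ones. Playwright can instead declare one `test()` per input inside a `for` loop. The framework-free sketch below only illustrates the underlying idea: run each case independently and record its own result. `runEachCase` and `CaseResult` are hypothetical names.

```typescript
interface CaseResult {
  name: string;
  passed: boolean;
  error?: string;
}

// Runs the same async check against every input and records each outcome,
// so one failing input does not prevent the others from being checked.
async function runEachCase<T>(
  cases: readonly T[],
  nameOf: (c: T) => string,
  check: (c: T) => Promise<void>,
): Promise<CaseResult[]> {
  const results: CaseResult[] = [];
  for (const c of cases) {
    try {
      await check(c);
      results.push({ name: nameOf(c), passed: true });
    } catch (err) {
      results.push({
        name: nameOf(c),
        passed: false,
        error: err instanceof Error ? err.message : String(err),
      });
    }
  }
  return results;
}
```

Running the cases one after another keeps the results in input order. In a real suite, declaring a separate `test()` per snippet gives the same isolation, plus per-snippet reporting from Playwright.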

Here is my test case:

```typescript
import { test, expect } from "@playwright/test";
import { runSteps } from "passmark";
import { snippets } from "../data/mock";
import { API_URL, BASE_URL } from "../constant";

test("View code snippets", async ({ page, request }) => {
  test.setTimeout(200_000);

  for (const snippet of snippets) {
    // 1. Create snippet via POST
    const response = await request.post(API_URL, {
      data: {
        title: snippet.title,
        body: snippet.body,
        language: snippet.language,
        theme: snippet.theme,
        expires_in: snippet.expiriesIn
      }
    });
    expect(response.ok()).toBeTruthy();

    const responseBody = await response.json();
    const id = responseBody.id;
    expect(id).toBeTruthy();

    const fullUrl = `${BASE_URL}${id}`;

    // 2. Run UI test using the generated URL
    await runSteps({
      page,
      userFlow: `View snippet: ${snippet.title}`,
      steps: [
        { description: `Navigate to ${fullUrl}` }
      ],
      assertions: [
        { assertion: `Visually confirm you see ${snippet.assertion}` }
      ],
      test,
      expect
    });
  }
});
```

And when you run this test case, you get the following result.

```console
$ npx playwright test --project chromium --ui
```

All tests passed, but there's something off about this testing approach. As you may have noticed, we're mixing API calls into our Passmark test, by which I mean all the POST "/api/v1/paste/" steps.

If the API fails → the UI test never runs → the test fails ❌

If the UI is broken → the test also fails ❌

That's the real problem: not that it fails, but that it fails without making clear which part broke.
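Separately from the restructuring in the next section, such failures can be made less ambiguous by labeling each error with the phase it came from. Below is a minimal TypeScript sketch. `inPhase`, `PhaseError` and the phase names are hypothetical.

```typescript
type Phase = "api-setup" | "ui-check";

// An error that remembers which phase of the test produced it.
class PhaseError extends Error {
  readonly phase: Phase;
  constructor(phase: Phase, cause: unknown) {
    super(`[${phase}] ${cause instanceof Error ? cause.message : String(cause)}`);
    this.name = "PhaseError";
    this.phase = phase;
  }
}

// Runs one phase and re-throws any failure tagged with that phase's name.
async function inPhase<T>(phase: Phase, work: () => Promise<T>): Promise<T> {
  try {
    return await work();
  } catch (err) {
    throw new PhaseError(phase, err);
  }
}
```

In the first test case, you could wrap the POST request together with its `response.ok()` check in one `inPhase("api-setup", ...)` call, and `runSteps(...)` in another. A failure report would then start with `[api-setup]` or `[ui-check]`. The `ok()` check needs to be inside the wrapper because Playwright's `request.post` does not throw on HTTP error statuses by default.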

Separation of Concerns

I can think of two solutions here. The simplest one is to separate the API calls from the Passmark test. Our goal isn't to test the API endpoints, and Passmark is one piece of the Playwright puzzle, so the best approach is to use setup and teardown hooks (called beforeEach, beforeAll, afterEach, and afterAll in Playwright). This means placing the API calls in the setup phase, where they supply the IDs for the Passmark test case.

Here's how our test case would appear after refactoring:

```typescript
import { test, expect } from "@playwright/test";
import { runSteps } from "passmark";
import { snippets } from "../data/mock";
import { API_URL, BASE_URL } from "../constant";

test.describe("View code snippets", () => {
  let snippetUrls: string[] = [];

  test.beforeEach(async ({ request }) => {
    snippetUrls = [];

    for (const snippet of snippets) {
      const response = await request.post(API_URL, {
        data: {
          title: snippet.title,
          body: snippet.body,
          language: snippet.language,
          theme: snippet.theme,
          expires_in: snippet.expiriesIn
        }
      });

      // Treat API as setup, not the thing we're testing
      if (!response.ok()) {
        test.skip(true, "Setup failed: API unavailable");
      }

      const responseBody = await response.json();
      const id = responseBody.id;
      if (!id) {
        test.skip(true, "Setup failed: No ID returned");
      }

      snippetUrls.push(`${BASE_URL}${id}`);
    }
  });

  test("should display all snippets correctly", async ({ page }) => {
    test.setTimeout(150_000);

    for (let i = 0; i < snippets.length; i++) {
      const snippet = snippets[i];
      const url = snippetUrls[i];

      await runSteps({
        page,
        userFlow: `View snippet: ${snippet.title}`,
        steps: [
          { description: `Navigate to ${url}` }
        ],
        assertions: [
          { assertion: snippet.assertion }
        ],
        test,
        expect
      });
    }
  });
});
```

Fewer steps, cleaner results, and a more robust, concise test. This is how Passmark presents the outcomes.

Test Case Improvements

Writing test cases always leaves room for improvement. For instance, turning the entire setup process, including the API calls, into a custom Playwright fixture, or running the tests in parallel, could nearly triple the speed of the tests, since the three snippets don't depend on each other!
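The setup half of that idea can be illustrated without Playwright. The POST requests don't depend on each other, so they can be started together with `Promise.all` instead of being awaited one by one. This is a framework-free sketch with hypothetical `createAll` and `createAllSequentially` helpers; the `create` callback stands in for the API call. Turning this into a Playwright fixture (via `test.extend`) or running the UI checks in parallel workers is a separate step not shown here.

```typescript
// Starts every create() call at once, then waits for all of them.
// Promise.all keeps results in input order, so urls[i] still matches items[i].
async function createAll<T>(
  items: readonly T[],
  create: (item: T) => Promise<string>,
): Promise<string[]> {
  return Promise.all(items.map((item) => create(item)));
}

// One request at a time, like the for...of loop in the refactored beforeEach.
async function createAllSequentially<T>(
  items: readonly T[],
  create: (item: T) => Promise<string>,
): Promise<string[]> {
  const urls: string[] = [];
  for (const item of items) {
    urls.push(await create(item));
  }
  return urls;
}
```

In the refactored `beforeEach`, `create` would wrap the `request.post(...)` call and return `${BASE_URL}${id}`. Because `Promise.all` preserves input order, `snippetUrls[i]` would still line up with `snippets[i]`.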

Furthermore, you could go much deeper into writing tests, creating well-maintained and robust test cases that thoroughly examine every aspect of the codebase.

Useful Links

Tests repository: https://github.com/collove/testing-pasteme/

PasteMe: https://pasteme.pythonanywhere.com (CLI)

Final Words

With the rise of LLMs and generative AI, I expected something like Passmark to emerge. It's an impressive way to test the UI and UX of websites. With well-crafted prompts, a structured approach to describing steps, and smart assertions, tests can become even more thorough and detailed, ensuring the product is rigorously evaluated.

Integrating Passmark into the CI process could boost development reliability. I would use Passmark across CI pipelines, while keeping in mind how time-intensive Playwright runs can be.

It's an AI after all, and its results depend on how it's prompted, so I wouldn't prioritize an LLM-powered testing tool over manually crafted tests. Still, it's a valuable addition that can help surface potential bugs.

If You Are a Passmark Developer, BTW...

Here is my perspective on using Passmark (1.0.12), which I think could help with further enhancements.

✅ - The developer experience (DX) was solid. Even as a native Python developer, I found writing the tests pretty easy and quite enjoyable.

✅ - I liked the Placeholder System. Expanding it to cover all types of data in all languages would be a big advantage.

✅ - The Snapshot Mechanism and OpenAI's CUA work remarkably well, even when the prompt lacks clarity.

❌ - Lack of documentation.

❌ - Lack of support for all popular LLMs.

❌ - Steps and Assertions need templates. Providing a set of prompt template examples would be ideal.

I truly appreciate this event for introducing me to Passmark. It was an exciting adventure to get it working and to write LLM-based test cases. 🍻